Introduction: The 20-Watt Supercomputer

Modern artificial intelligence has reached a resource paradox. Large language models display unprecedented capabilities, yet they are tethered to immense, thirsty data centers that draw megawatts of electricity and need massive cooling towers to dissipate the heat of their labor. In stark contrast, the human brain, the most sophisticated information processor in the known universe, performs remarkably flexible reasoning and perception on approximately 20 watts of power. That is roughly what a dim household lightbulb draws.

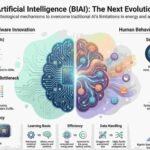

As a strategist at the intersection of neuroscience and computer engineering, I see this not just as an efficiency gap, but as a strategic inflection point. We are moving from an era of brute-force computation—defined by massive GPU clusters and static datasets—to an era of elegant, physics-based efficiency. This is the promise of Brain-Inspired Artificial Intelligence (BIAI) and neuromorphic computing. By translating the biological principles of neural architecture into silicon, we are not merely building better tools; we are rewriting the fundamental laws of how machines “think.”

——————————————————————————–

1. Spikes, Not Scalars: The Power of Sparse Communication

Traditional Artificial Neural Networks (ANNs) pass continuous real numbers (scalars) between layers, values that are often interpreted as a neuron’s average firing rate, or “rate code.” Every unit computes on every input, so the hardware performs power-hungry multiply-and-add operations even when the input data barely changes. Brain-inspired systems, specifically Spiking Neural Networks (SNNs), operate on a more radical principle: sparse, binary signals known as “spikes.”

In an SNN, a neuron stays silent until its internal “membrane potential” reaches a critical threshold, at which point it emits a spike and resets. This event-driven behavior lets hardware save energy by working only when a spike arrives, mirroring the sparse activity of the human cortex.

“The sparsity of the synaptic spiking inputs and the corresponding event-driven nature of neural processing can be leveraged by hardware implementations that have demonstrated significant energy reductions as compared to conventional Artificial Neural Networks (ANNs).” — Jang et al.

By encoding information in the precise timing of these events, SNNs running on neuromorphic hardware can reduce the energy cost of a single spike-driven operation to as low as a few picojoules. This shift from constant calculation to event-triggered communication is the first step in dismantling the unsustainable energy overhead of modern AI.
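The threshold-and-fire behavior described in this section can be sketched with a leaky integrate-and-fire (LIF) neuron, one common simplified spiking model. This is a minimal illustration, not the model used by any particular chip: the function name `simulate_lif` and the `threshold`, `leak`, and `v_reset` values are assumptions chosen for readability.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, v_reset=0.0):
    """Discrete-time leaky integrate-and-fire neuron (illustrative parameters).

    Returns a list of 0/1 spikes, one per time step.
    """
    v = v_reset                      # membrane potential
    spikes = []
    for current in input_current:
        v = leak * v + current       # decay the old potential, then integrate input
        if v >= threshold:           # threshold reached: emit a spike
            spikes.append(1)
            v = v_reset              # reset after firing
        else:
            spikes.append(0)         # stay silent: nothing is sent downstream
    return spikes


if __name__ == "__main__":
    quiet = [0.0] * 5
    burst = [0.6] * 5
    spikes = simulate_lif(quiet + burst + quiet)
    print(spikes)       # [0, 0, 0, 0, 0, 0, 1, 0, 1, 0, 0, 0, 0, 0, 0]
    print(sum(spikes))  # 2
```

Across 15 time steps the neuron communicates only twice, both times during the burst of input. In event-driven hardware, the silent steps are the ones that cost little or nothing.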

——————————————————————————–

2. Breaking the Von Neumann Bottleneck with In-Memory Computing

A fundamental limitation of classical computing is the “von Neumann bottleneck”: the physical separation between the processor and the memory. In standard AI workloads, the energy spent ferrying data back and forth between these two components can far exceed the energy used for the actual computation.

Neuromorphic architectures address this by co-locating memory and processing, much as the brain stores and processes information in the same physical circuits. From an engineering standpoint, however, it is vital to distinguish between two approaches: “near-memory” computing, as in IBM’s digital NorthPole chip, where memory is tightly intertwined with the compute cores, and “in-memory” computing, as in analog chips like Hermes, where computation happens exactly where the data lives.

The Strategic Benefits of In-Memory Architecture:

- Zero Data Shuffle: Computation occurs within the memory array, eliminating the energy-heavy transport of synaptic weights.

- Ultralow Latency: Eliminates the wait times associated with retrieving data from external RAM.

- Massive Parallelism: Enables thousands of cores to operate simultaneously without competing for a single memory bus.

The results are a direct threat to the GPU-centric “Old Guard.” For specific inference tasks, the NorthPole chip has been reported to run 46.9 times faster and 72.7 times more energy-efficiently than state-of-the-art GPUs.

——————————————————————————–

3. The Three-Factor Rule and the Death of Global Backpropagation

Standard deep learning relies on “backpropagation,” a global update in which error signals are passed backward through every layer, and which is increasingly difficult to implement in complex, recurrent topologies. Backprop lacks biological plausibility, and models trained with it are typically “frozen” once training ends. For AI at the edge (think autonomous robots or prosthetics), this is a serious limitation.

We are moving toward the Three-Factor Rule, a biologically inspired local learning mechanism. In this framework, synaptic weights are updated based on three variables:

- A global learning signal (representing a reward or error).

- Pre-synaptic activity (the “input” neuron).

- Post-synaptic activity (the “output” neuron).

The two local factors follow Hebb’s hypothesis, “neurons that fire together, wire together,” while the global signal decides whether that coincidence is reinforced or weakened. Because each update needs only information available at the synapse plus one broadcast signal, the system can learn autonomously. A robot wouldn’t need to “call home” to a central server to adjust its movement; the learning happens in its “silicon muscles” in real time. This paves the way for continuous, online adaptation that global backpropagation struggles to support.
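In its simplest form, the three-factor rule reduces to one product per synapse: learning signal times pre-synaptic activity times post-synaptic activity. The sketch below assumes that simplest form; published variants usually add eligibility traces and spike-timing terms, which are omitted here. The name `three_factor_update` and the learning rate `lr` are illustrative assumptions.

```python
def three_factor_update(weights, pre, post, learning_signal, lr=0.1):
    """Return updated weights using a simplified three-factor rule.

    weights[j][i] connects pre-synaptic neuron i to post-synaptic neuron j.
    Each change uses only the two neurons a synapse touches plus one
    broadcast scalar (a reward or error), so no backward pass is required.
    """
    return [
        [w + lr * learning_signal * post_j * pre_i
         for w, pre_i in zip(row, pre)]
        for row, post_j in zip(weights, post)
    ]


if __name__ == "__main__":
    weights = [[0.0, 0.0],
               [0.0, 0.0]]
    pre = [1, 0]    # only input neuron 0 spiked
    post = [0, 1]   # only output neuron 1 spiked

    # Positive signal: the co-active synapse is strengthened.
    print(three_factor_update(weights, pre, post, learning_signal=1.0))
    # [[0.0, 0.0], [0.1, 0.0]]

    # Negative signal: the same coincidence is weakened instead.
    print(three_factor_update(weights, pre, post, learning_signal=-1.0))
    # [[0.0, 0.0], [-0.1, 0.0]]
```

Only the synapse whose input and output neurons were both active changes, and the sign of the broadcast signal determines the direction of that change.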

——————————————————————————–

4. Why “Unlearning” is the Secret to Cognitive Efficiency

In our rush to build models that “know everything,” we often forget that for high-order intelligence, forgetting is not a bug; it is a feature. The human brain utilizes “synaptic pruning” to remove redundant or outdated connections, optimizing the limited physical space and energy of the cranium.

“Machine Unlearning” is the silicon equivalent of this pruning. It is the selective removal of a specific data point’s influence without requiring the model to be entirely retrained. This is a critical bridge between biological necessity and business reality.

“Modeling intelligence on biological systems can foster the creation of AI that is trustworthy and aligned with human values… This mechanism enables the brain to maintain cognitive efficiency by removing less useful or redundant information, thereby optimizing memory storage and retrieval.” — Ren and Xia

Strategically, unlearning supports GDPR compliance (the “right to be forgotten”) and keeps models relevant by purging biased or outdated data. It transforms AI from a static library into a dynamic, adaptive memory system.
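To make “removing one data point’s influence without retraining” concrete, here is a toy case where unlearning is exact: a nearest-centroid classifier that stores only per-class sums and counts. This is an illustrative assumption, not a method from the sources quoted above, and deep networks generally require approximate unlearning techniques. The class `CentroidModel` and its method names are hypothetical.

```python
class CentroidModel:
    """Nearest-centroid classifier that supports exact unlearning."""

    def __init__(self):
        self.sums = {}    # label -> per-feature running sum
        self.counts = {}  # label -> number of examples learned

    def learn(self, x, label):
        if label not in self.sums:
            self.sums[label] = [0.0] * len(x)
            self.counts[label] = 0
        self.sums[label] = [s + xi for s, xi in zip(self.sums[label], x)]
        self.counts[label] += 1

    def unlearn(self, x, label):
        """Subtract one previously learned example's contribution."""
        self.sums[label] = [s - xi for s, xi in zip(self.sums[label], x)]
        self.counts[label] -= 1
        if self.counts[label] == 0:          # nothing left for this class
            del self.sums[label], self.counts[label]

    def centroid(self, label):
        return [s / self.counts[label] for s in self.sums[label]]

    def predict(self, x):
        def dist2(label):
            return sum((xi - ci) ** 2
                       for xi, ci in zip(x, self.centroid(label)))
        return min(self.sums, key=dist2)


if __name__ == "__main__":
    model = CentroidModel()
    model.learn([0.0, 0.0], "a")
    model.learn([2.0, 0.0], "a")
    model.learn([10.0, 10.0], "b")
    model.learn([8.0, 8.0], "a")          # a record we later must forget

    print(model.predict([6.0, 6.0]))      # a
    model.unlearn([8.0, 8.0], "a")
    print(model.centroid("a"))            # [1.0, 0.0]
    print(model.predict([6.0, 6.0]))      # b
```

After `unlearn`, the model is identical to one that never saw the forgotten record, which is the guarantee that practical unlearning methods try to approximate for far larger models.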

——————————————————————————–

5. The Analog Renaissance: Computing with Chalcogenide Glass

While we live in a digital world, the brain is essentially an analog processor. IBM Research is spearheading an “Analog Renaissance” through the Hermes chip, which utilizes Phase-Change Memory (PCM). These devices use chalcogenide glass—a material that transitions between amorphous and crystalline states—to store synaptic weights as a continuum of conductance, rather than binary 0s and 1s.

In this “physical computing” framework, we don’t compute the arithmetic step by step; we let the laws of physics do the work:

- Ohm’s law performs multiplication: the current through each device equals the applied voltage times the device’s conductance.

- Kirchhoff’s current law performs summation: the currents flowing into a shared wire add up at that node.
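Taken together, the two laws above mean that a grid of programmable conductances computes a matrix-vector product in one physical step. The sketch below simulates an idealized crossbar in software to show the arithmetic the circuit performs. It assumes perfect devices (no noise, drift, or wire resistance) and non-negative weights; the function name `crossbar_mvm` and the example values are illustrative.

```python
def crossbar_mvm(conductances, voltages):
    """Column currents of an idealized resistive crossbar.

    conductances[i][j]: conductance of the device joining row i to column j.
    voltages[i]: voltage applied to row i.
    Returns one output current per column.
    """
    n_cols = len(conductances[0])
    currents = [0.0] * n_cols
    for row, v in zip(conductances, voltages):
        for j, g in enumerate(row):
            # Ohm's law gives each device's current (g * v); adding it to the
            # column total models Kirchhoff's current law on the shared wire.
            currents[j] += g * v
    return currents


if __name__ == "__main__":
    # Stored weights as conductances (arbitrary units for illustration).
    G = [[1.0, 2.0],
         [3.0, 4.0]]
    V = [1.0, 0.5]              # input activations encoded as voltages
    print(crossbar_mvm(G, V))   # [2.5, 4.0]
```

In software this costs one multiply-and-add per device; in the analog array, all of those operations happen simultaneously as current flows, and the stored weights never leave the memory cells.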

However, a strategic limitation remains: these devices are currently optimized for inference, not training. The reason is the limited endurance (“durability”) of PCM; the physical state of the glass cannot yet survive the trillions of conductance changes required during an intensive training phase. Nevertheless, for running massive models at the edge, analog physics offers a density and efficiency that digital logic struggles to match.

——————————————————————————–

Conclusion: Toward a Conscious Architecture

If we can master the physical architecture of the neuron and the local rules of the synapse, we aren’t just building better chips—we are laying the foundation for a “Conscious Architecture.” The ultimate goal is to move beyond tools that merely process data toward systems that possess a “Theory of Mind”—the ability to understand, predict, and empathize with human intentions.

By mimicking both the physical efficiency and the behavioral adaptability of the human brain, we are closing the gap between machine and mind. This leads us to a final, provocative question for the next generation of strategists:

If we successfully build a machine that mimics the physical and behavioral architecture of the human brain, at what point does it cease to be a “tool” and begin to be a “peer”?
