Nano Banana 2: Google’s AI Image Model

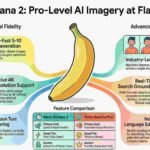

Nano Banana 2 is Google’s advanced AI image generator and editor, powered by Gemini, excelling in fast generation, precise edits, and text rendering.

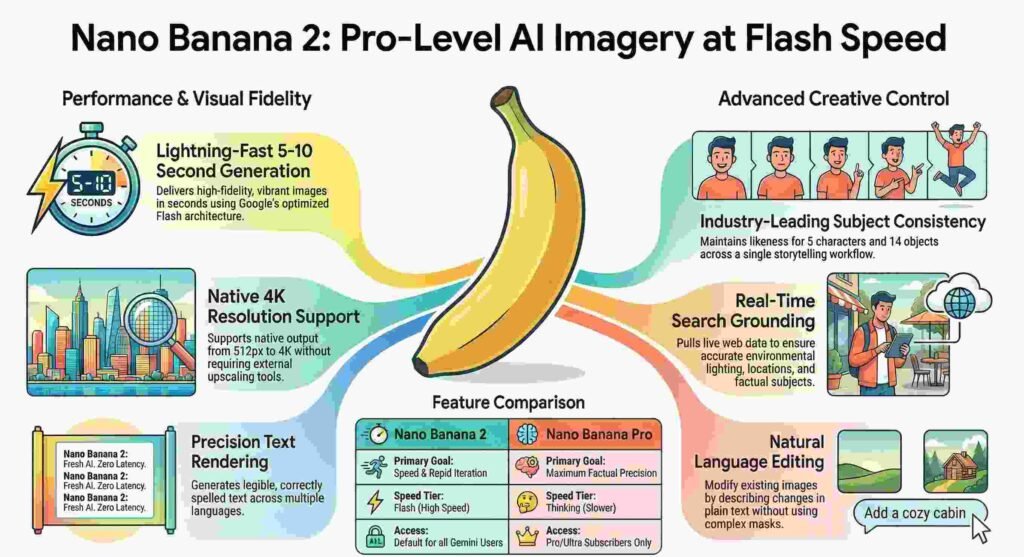

On February 26, 2026, Google effectively dissolved the boundary between speed and quality with the release of Nano Banana 2 (officially Gemini 3.1 Flash Image). By merging high-speed architecture with sophisticated reasoning, Google has delivered the industry’s first “unambiguous winner” that offers Pro-level quality at Flash speed.

1. The “Death of Gibberish”: Typography That Finally Works

The holy grail of AI art has always been legible text; with Nano Banana 2, Google just claimed the prize. The model moves past the era of “wobbly characters” by implementing character-by-character validation to ensure text is correctly spelled and rendered. This isn’t just about legibility; the model now supports real-time translation and localization within the image, allowing global brands to generate multi-language assets in a single workflow.

This advancement is a massive shift for marketers and designers working on billboards, greeting cards, and product packaging. Previously, these professionals had to fix AI “hallucinations” in post-production, but Nano Banana 2 delivers production-ready typography the first time.
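For teams that still want a safety net before sending assets to print, a simple external check can compare the copy you asked for against the text read back from the finished image. The sketch below is only an illustration: the article does not describe how the model's internal validation works, the `text_mismatches` helper is hypothetical, and the "rendered" string is assumed to come from an OCR step that is not shown here.

```python
from difflib import SequenceMatcher
from typing import List, Tuple


def text_mismatches(expected: str, rendered: str) -> List[Tuple[str, str, str]]:
    """Return (operation, expected_fragment, rendered_fragment) for each difference.

    An empty list means the rendered text matches the expected copy exactly.
    """
    matcher = SequenceMatcher(a=expected, b=rendered, autojunk=False)
    return [
        (tag, expected[i1:i2], rendered[j1:j2])
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
        if tag != "equal"
    ]


# "rendered" stands in for text extracted from the generated image (assumption).
for issue in text_mismatches("GRAND OPENING", "GRAND OPENNIG"):
    print(issue)
```

An empty result is a reasonable signal that the headline can go out without a manual retouch; anything else points at the exact characters to inspect.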

“Introducing Nano Banana 2, our best image model yet… The precision is mind blowing,” stated Google CEO Sundar Pichai.

2. Narrative Control: Subject Consistency Across the Storyboard

One of the most profound features is the new Subject Consistency engine, which allows creators to lock in the likeness of up to five characters and maintain the fidelity of 14 distinct objects. This is the “director’s mode” for AI art, enabling creators to build cohesive visual narratives without identities shifting between frames. In a gold-standard farm scene demo, the model maintained the exact spots on a cow and the specific texture of a tractor across ten different camera angles.

For visual storytellers and storyboard artists, this solves the “identity drift” problem that has plagued AI generation since its inception. You can now build a 10-frame sequence where the protagonist’s facial structure remains 100% accurate, allowing for a level of continuity that was previously impossible. This moves the medium from random experimentation to reliable, professional visual production.
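If you plan storyboards programmatically, it can help to check a shot list against the limits quoted above (five characters, 14 objects) before generating anything. This is a rough sketch, not part of any Google SDK: `StoryboardPlan` and `check_limits` are hypothetical names, and the only facts taken from the article are the two numeric limits.

```python
from dataclasses import dataclass, field
from typing import List

MAX_CHARACTERS = 5   # limit quoted in the article
MAX_OBJECTS = 14     # limit quoted in the article


@dataclass
class StoryboardPlan:
    """Hypothetical container for the subjects a storyboard should keep fixed."""
    characters: List[str] = field(default_factory=list)
    objects: List[str] = field(default_factory=list)


def check_limits(plan: StoryboardPlan) -> List[str]:
    """Return human-readable problems; an empty list means the plan fits."""
    problems = []
    if len(plan.characters) > MAX_CHARACTERS:
        problems.append(
            f"{len(plan.characters)} characters exceeds the limit of {MAX_CHARACTERS}"
        )
    if len(plan.objects) > MAX_OBJECTS:
        problems.append(
            f"{len(plan.objects)} objects exceeds the limit of {MAX_OBJECTS}"
        )
    return problems


# The farm demo from the article: one character, two tracked objects.
farm = StoryboardPlan(characters=["farmer"], objects=["cow", "tractor"])
print(check_limits(farm))
```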

3. Real-Time Awareness: Grounding Images in the Living Web

Nano Banana 2 isn’t just a static database; it uses Gemini’s Search Grounding to pull real-time data to inform environmental conditions. The standout “Window Seat” demo showcases this by pulling live local weather data to influence the lighting and atmosphere of a 4K render. If you prompt for a “morning in Seattle,” the model checks the actual local weather to decide if the light should be a crisp blue or a diffused, rainy gray.

This world-knowledge integration ensures that images aren’t just aesthetically pleasing but contextually accurate. However, as an expert reviewer, I still advise a layer of healthy skepticism; while the model is powerful for infographics, users should verify data-critical outputs, as weather data can occasionally lag. For most creators, though, the ability to have live data dictate “Tyndall effects” and volumetric lighting is a paradigm shift.
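One practical way to apply that skepticism is to compare the timestamp of whatever weather observation you are relying on against the current time. The sketch below assumes you have such a timestamp from your own trusted source; the `is_stale` helper and the three-hour threshold are illustrative choices, not anything the model or its API is documented to expose.

```python
from datetime import datetime, timedelta, timezone


def is_stale(
    observed_at: datetime,
    now: datetime,
    max_age: timedelta = timedelta(hours=3),  # arbitrary threshold (assumption)
) -> bool:
    """Return True if the observation is older than max_age relative to now."""
    return now - observed_at > max_age


# Fixed timestamps so the example is reproducible.
now = datetime(2026, 3, 1, 12, 0, tzinfo=timezone.utc)
fresh = datetime(2026, 3, 1, 11, 0, tzinfo=timezone.utc)
old = datetime(2026, 2, 28, 12, 0, tzinfo=timezone.utc)

print(is_stale(fresh, now), is_stale(old, now))  # prints: False True
```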

4. Peeking into the Brain: The “Thinking” Process Revealed

In a move toward unprecedented transparency, Google has introduced a “Thinking” mode via the `include_thoughts=True` parameter. Rather than operating as a black box, the model now reveals its internal reasoning regarding composition, spatial relationships, and instruction following before it renders the final pixels. This allows users to see exactly how the model interpreted complex, multi-layered prompts before the generation is complete.

This transparency is more than just a novelty; it is a surgical tool for refinement. By understanding the model’s reasoning, users can adjust their prompts based on where the model’s “logic” diverged from their vision.
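To make that refinement loop concrete, here is a minimal sketch of separating the model's reasoning from its final output so the two can be reviewed side by side. It makes no real API call: the response is mocked as a plain list of parts, and the `Part` class, its `thought` flag, and `split_thoughts` are assumptions for illustration. Only the `include_thoughts=True` setting comes from the text above, so check the official SDK reference for the actual request and response shapes.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Part:
    """Hypothetical response part; the field names are assumptions."""
    text: str
    thought: bool = False  # True if this part carries model reasoning


def split_thoughts(parts: List[Part]) -> Tuple[List[str], List[str]]:
    """Separate reasoning parts from final-output parts, preserving order."""
    thoughts = [p.text for p in parts if p.thought]
    answer = [p.text for p in parts if not p.thought]
    return thoughts, answer


# Mocked response standing in for a request made with include_thoughts=True.
mock_parts = [
    Part("Place the tractor left of the barn; keep the horizon low.", thought=True),
    Part("Render the headline centered above the scene.", thought=True),
    Part("<image data would appear here>"),
]

thoughts, answer = split_thoughts(mock_parts)
for i, t in enumerate(thoughts, start=1):
    print(f"thought {i}: {t}")
```

Reading the thoughts first makes it easier to spot which instruction was misread and to adjust only that part of the prompt.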

As one developer source noted: “It’s like having a conversation with your artist!”

5. Pro Accessibility: High-Fidelity Specs for the Masses

The most significant lineup change is that Nano Banana 2 now replaces Nano Banana Pro as the default engine across the Gemini app. However, in a nod to power users, Google has ensured that Pro/Ultra subscribers can still access the original Nano Banana Pro for “specialized tasks” via the three-dot menu. This update effectively makes 4K native-quality output—powered by the GemPix 2 Diffusion Renderer—the baseline for millions of users worldwide.

The model is now broadly available across the Google ecosystem, including Google Search (in 141 countries), Vertex AI, and specialized platforms like fal.ai and Atlas Cloud. Every output is protected by SynthID digital watermarking and C2PA Content Credentials, ensuring that these high-fidelity assets remain identifiable in the wild. By raising the “floor” of AI art, Google has ensured that professional textures and 4K specs are no longer exclusive luxuries.

Conclusion: The New Standard for Digital Creativity

Nano Banana 2 marks the definitive transition from AI as a toy to AI as a production-ready creative stack. By solving the trifecta of text precision, subject consistency, and real-time grounding, Google has effectively hit “zero friction” for high-end digital creation. As we move forward, the question is no longer whether AI can generate a usable image, but how the sheer volume of high-quality content will reshape our daily digital landscape.
