The Relatable Crisis of the “Dumb” AI

We have all been there. You are standing at the helm of a frontier model like GPT-5 or Claude 4, expecting a masterstroke of digital intelligence, only to receive a generic summary, a hallucinated citation, or an output that ignores your core constraints. In the “vibe-based” era of 2023, we treated these interactions like a slot machine, hoping a few “magic words” or a polite “please” would tip the scales.

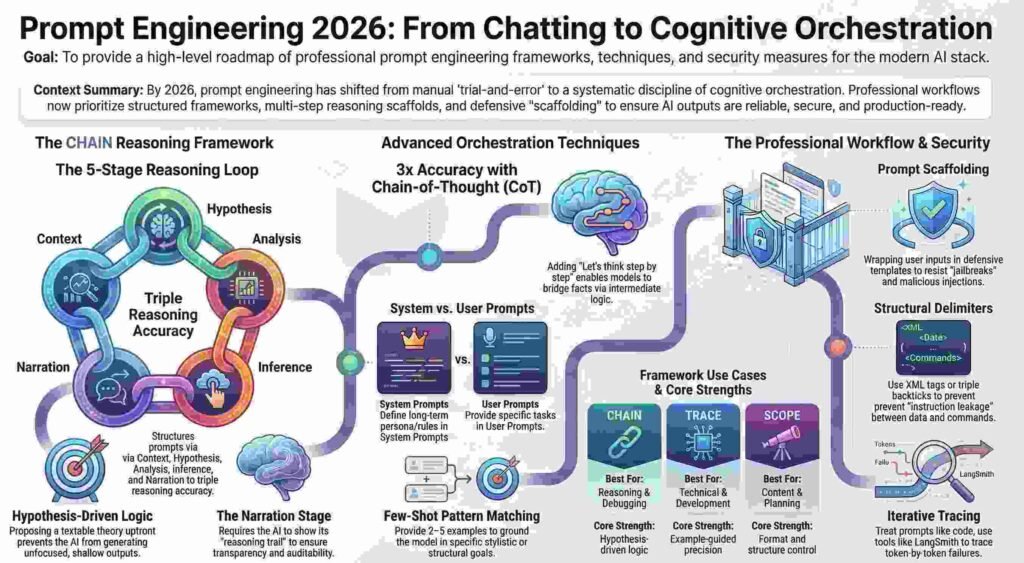

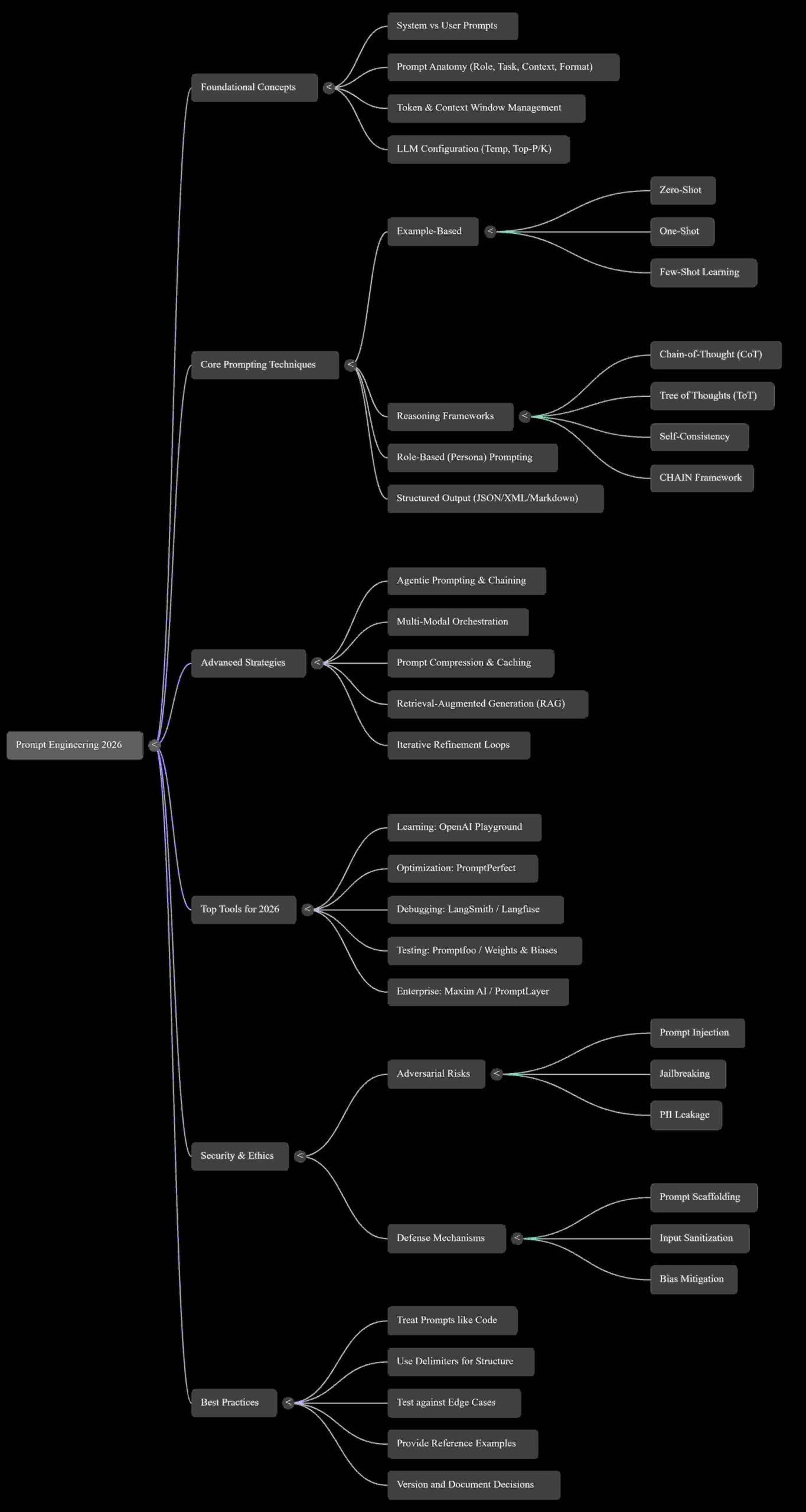

By 2026, that era is officially dead. As models have scaled to trillions of parameters and context windows have expanded into the millions of tokens, the complexity of our demands has increased exponentially. We are no longer just “talking” to a chatbot; we are engaged in the professional discipline of Agentic Orchestration. The central reality of the modern technical landscape is clear: AI doesn’t fail—unstructured prompting does.

1. From Guesswork to the “AI IDE”: The Professional Tooling Shift

The single biggest shift for AI strategists in 2026 is the transition from manual “tweaking” to systematic, professional-grade toolchains. We have moved from the “OpenAI Playground” phase into a world where we are debugging instructions rather than just editing text.

Prompt engineering now mirrors the software development lifecycle. Professional platforms like LangSmith, PromptLayer, and Langfuse serve as the “AI IDEs” of our era, allowing teams to version instructions, trace logic across complex chains, and monitor performance metrics like latency and token cost in real-time.

“Great AI results are rarely accidental.”

Treating prompts as “code” is the requirement for enterprise-grade reliability. This means implementing Git-style versioning, A/B testing variations, and collaborative review processes before a single instruction reaches production.

2. The “Hypothesis” Secret: Why CHAIN Trumps “Step-by-Step”

In 2022, adding “let’s think step-by-step” was a breakthrough. In 2026, high-stakes reasoning requires the CHAIN framework. While standard chain-of-thought methods tell the AI how to reason, CHAIN provides a structure for what to analyze.

The framework consists of five stages:

- Context: Delivering all background, data, and constraints to eliminate assumptions.

- Hypothesis: Proposing a specific, testable theory regarding the answer or cause.

- Analysis: Listing the sub-questions or dimensions the AI must examine.

- Inference: Connecting findings and testing the hypothesis against the evidence.

- Narration: Defining the output format and making the reasoning trail visible.

The innovation here is the Hypothesis stage—the “Scientific Method” applied to silicon. By giving the AI a focal point to confirm, refine, or refute, you prevent “unfocused surveys of possibilities” and triple accuracy in complex debugging.

Strategist’s Note: Modern models handle these stages differently. GPT-4o often attempts to compress the Analysis stage; you must use explicit sectioning to force depth. Conversely, Claude 4 excels at the Inference stage, frequently surfacing unexpected connections if you allow it to weigh conflicting data points.

3. The System Prompt as the “AI Constitution”

One of the most frequent mistakes is failing to distinguish between the System Prompt and the User Prompt. Effective architecture requires a “separation of concerns.” The System Prompt serves as the AI’s “constitution” or “job description,” while the User Prompt is the immediate task.

To prevent “instruction leakage”—where the model confuses data with commands—professional engineers use XML tags (e.g., <instructions></instructions>) or triple backticks as robust delimiters.

| Feature | System Prompt | User Prompt |

| Purpose | Defines identity, persona, long-term behavior, and guardrails. | Provides the specific task, question, or data for one interaction. |

| Stability | Static; updated only during core capability or brand refinements. | Dynamic; changes with every single user request. |

| Enforcement | Technically enforced via delimiters (XML, """) to prevent leakage. | Volatile; the primary vector for user-driven task execution. |

4. The Complexity Trap: Breaking the “Single Prompt” Habit

A common pitfall is the attempt to bundle an entire workflow into one massive request. When you ask a model to classify an email, extract data, update a database, and draft a response simultaneously, its reasoning capabilities—and your Context Window Management—degrade.

Reliable AI requires a modular “Better Prompting Workflow”:

- Draft: Start with clear instructions using a high-reasoning model.

- Constraints: Explicitly define output formats and context boundaries.

- Stress Test: Ask the model, “How might these instructions be misunderstood?”

- Iterate: Break complex tasks into single-purpose, modular steps.

- Deploy and Monitor: Track performance in production via Langfuse to watch for latency and cost.

“Reliable AI requires clear communication. Sloppy prompts result in wasted time and unreliable data.”

5. Prompting as a Security Frontier: The Gandalf Lesson

As AI moves into the enterprise core, the line between “aligned” and “adversarial” behavior has become razor-thin. Research from platforms like Gandalf reveals that model guardrails are often bypassed not by hacking, but by clever reframing.

Attackers now use “progressive extraction” to rebuild sensitive data through partial reveals or exploit multilingual blind spots where safety filters are weaker in non-English inputs. More sophisticated “obfuscated prompts” use ASCII decimal codes or hex to slip malicious commands past traditional text filters.

To combat this, Prompt Scaffolding is no longer optional. This involves wrapping user inputs in structured, guarded templates that force the model to evaluate the safety of a request before executing it. We are no longer just asking questions; we are building defensive reasoning layers.

Conclusion: The Future is Conversational Logic

By 2027, the digital economy will value the ability to articulate complex thoughts into structured instructions as its most valuable skill. We have moved beyond “magic words” and into the era of conversational logic. The ability to design with context—leveraging RAG and structured reasoning—is what separates a casual user from an AI architect.

Discover more from TechResider Submit AI Tool

Subscribe to get the latest posts sent to your email.