Introduction: The Quiet Migration to the Edge

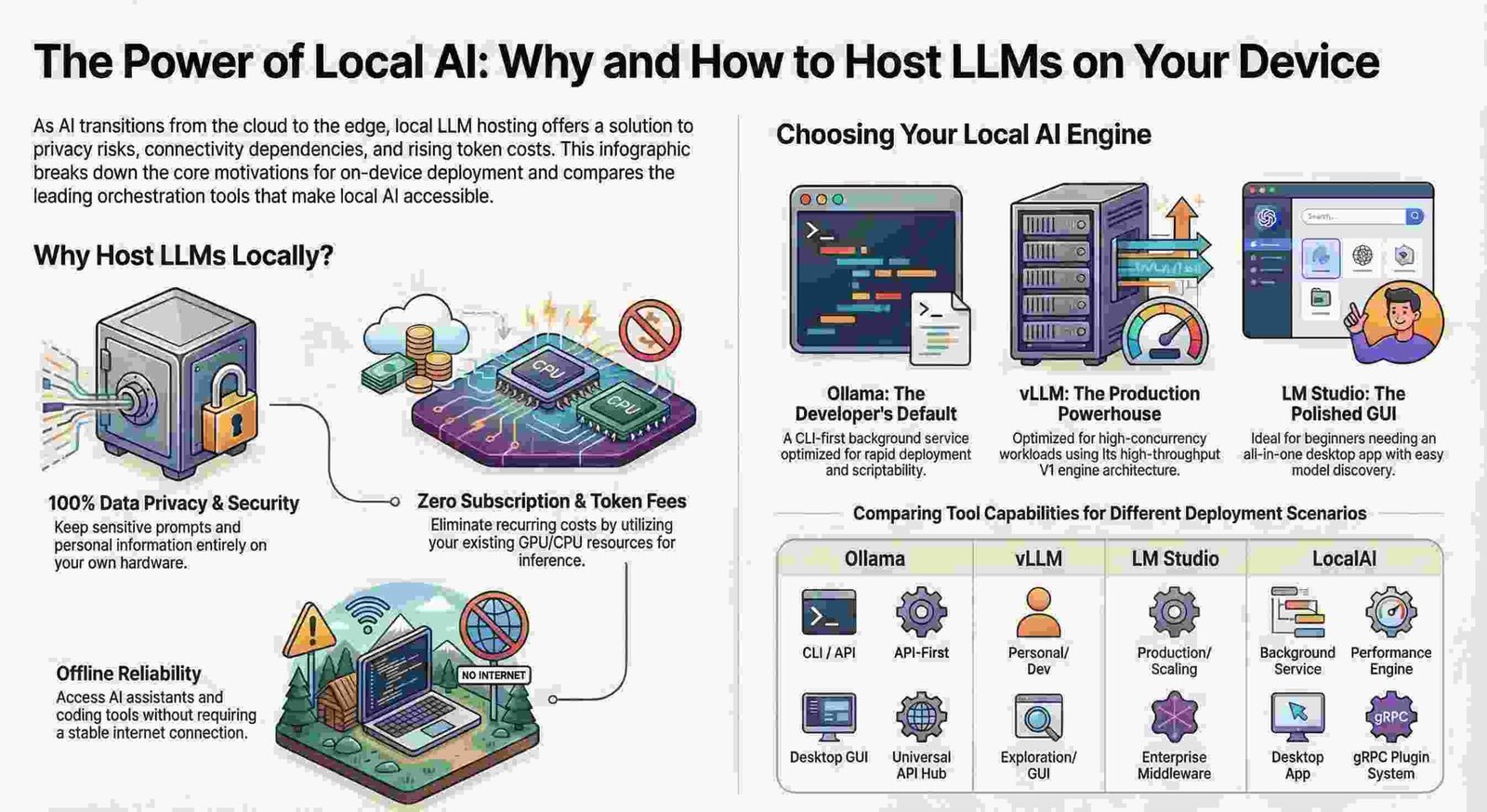

For years, the most capable Large Language Models (LLMs) sat behind the “black box” of the cloud. To access high-level reasoning, we traded away privacy and depended on a stable internet connection, at the mercy of remote servers we could not inspect. But the belief that long haunted local AI, that “local” meant “compromised,” has finally been exorcised.

As we move through 2026, we are witnessing a quiet but total migration. Local LLMs have graduated from a niche hobby for hardware enthusiasts into essential, “local-by-default” infrastructure. This isn’t just a change in the location of our compute; it is a fundamental re-architecting of the relationship between data, hardware, and intelligence.

——————————————————————————–

Your Device’s Memory is Living, Breathing, and “Elastic”

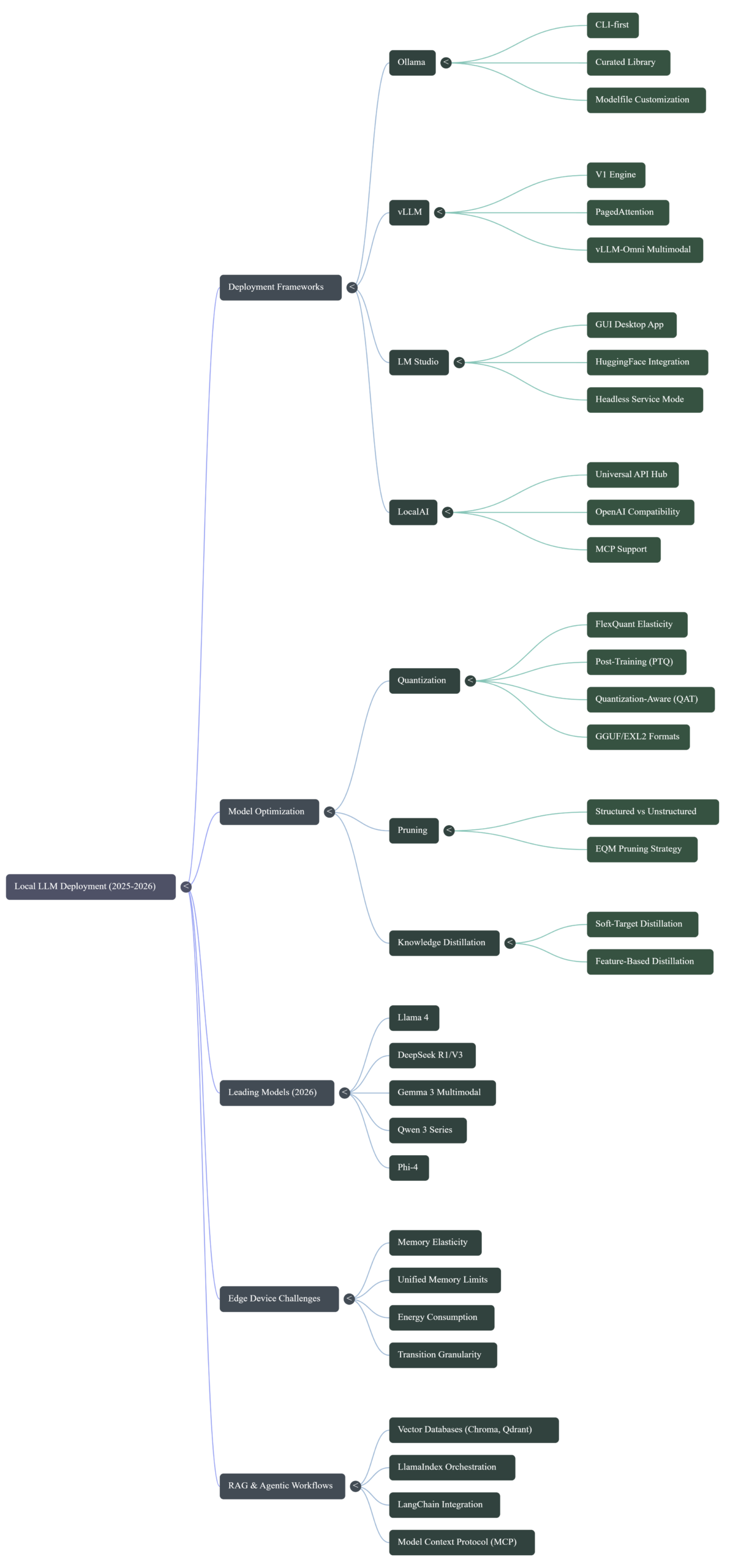

The biggest hurdle for edge AI has always been the rigid nature of model hosting. Modern Systems-on-Chip (SoCs) use unified memory architectures, where the CPU, the GPU, the OS, the browser, and every background task draw on one shared pool of RAM. On such devices, hosting a static, fixed-size LLM is a recipe for memory pressure and system crashes.

Harvard’s “FlexQuant” research tackles this with Memory Elasticity. Instead of a model acting like a lead weight in your RAM, the Elastic Quantization Model (EQM) allows the model to “breathe” alongside your other apps, growing or shrinking its footprint as memory becomes available or scarce.

“Most apps on mobile devices use around 100MB of memory or less. Therefore, an efficient elasticity hosting method should provide at least 100MB of memory granularity to avoid system interference.”

With a reported 15x better transition granularity and a 10x reduction in storage costs compared to previous methods, FlexQuant aims for a seamless user experience. The idea is that you can keep a large model (the pitch goes as far as 70B) “warm” in the background, and when you open a memory-hungry browser tab, the model shrinks its footprint by swapping some modules for lower bit-width versions. It gives up a little precision but keeps working, instead of stalling the system or being evicted.
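To make the “breathing” idea concrete, here is a minimal sketch of module-level bit-width swapping under a memory budget. It is not FlexQuant’s actual algorithm: the `Module` class, the `ALLOWED_BITS` set, and the greedy “step down the highest-precision module first” policy are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Hypothetical bit-widths a module can be stored at, from high to low.
# Every module's starting bit-width is assumed to be one of these values.
ALLOWED_BITS = (8, 6, 4, 3)


@dataclass
class Module:
    name: str
    params: int  # number of weights in this module
    bits: int    # current bit-width per weight

    def size_mb(self) -> float:
        # bits -> bytes -> megabytes
        return self.params * self.bits / 8 / 1e6


def total_mb(modules) -> float:
    return sum(m.size_mb() for m in modules)


def shrink_to_budget(modules, budget_mb):
    """Step modules down one bit-width at a time until the model fits.

    Returns the list of (module name, old bits, new bits) transitions taken.
    """
    steps = []
    while total_mb(modules) > budget_mb:
        # Only modules above the lowest bit-width can still shrink.
        candidates = [m for m in modules if m.bits > ALLOWED_BITS[-1]]
        if not candidates:
            raise MemoryError("budget cannot be met at the lowest bit-width")
        # Greedy choice (an assumption): shrink the highest-precision module,
        # breaking ties by size.
        target = max(candidates, key=lambda m: (m.bits, m.params))
        new_bits = ALLOWED_BITS[ALLOWED_BITS.index(target.bits) + 1]
        steps.append((target.name, target.bits, new_bits))
        target.bits = new_bits
    return steps
```

With four hypothetical 100M-parameter modules at 8 bits (400 MB in total) and a 330 MB budget, the loop steps three modules down to 6 bits, freeing 25 MB per transition. That per-step figure is the “granularity” the quote above is talking about: the smaller each step, the less memory the model gives up beyond what the system actually asked for.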

Analyst’s Take: We are moving from a world of “Can it run?” to a world of “How elegantly does it share?” Memory is no longer a static resource; it’s a conversation.

——————————————————————————–

The Great Tooling Divorce (Infrastructure vs. Interface)

The local AI ecosystem has matured into a two-layer stack, ending the era of “one tool to rule them all.” We are seeing a strategic split between the background engines that do the heavy lifting and the polished interfaces that manage the user experience.

- The Infrastructure Layer (Ollama, vLLM): These are the background powerhouses. Ollama, for instance, is an API-first, Docker-friendly service designed to be headless and scriptable. It provides the “muscles” for your system without cluttering your desktop.

- The Platform Layer (LM Studio, Jan): This is where the “Interface” evolution is happening. Tools like Jan have shifted toward agentic workflows, introducing the “Browser MCP” (Model Context Protocol). This allows a local model to not just chat, but to automate browser-based tasks and interact with your local environment as a true digital agent.

| Use Case | Winner | Reason |
| --- | --- | --- |
| App Developers | Ollama / vLLM | API-first, Docker-ready, always-on background service. |
| Power Users | LM Studio / Jan | Polished GUI, model discovery, and agentic “Browser MCP” tools. |
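To show what “API-first” means in practice, here is a minimal sketch of calling a locally running Ollama service from Python using only the standard library. It assumes Ollama is listening on its default port (11434) and that a model tagged `llama3.3` has already been pulled; the helper names `build_request` and `generate` are ours, not part of Ollama.

```python
import json
import urllib.request

# Ollama's generate endpoint on its default local port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming generate request for the local Ollama service."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt and return the generated text.

    Requires a running Ollama instance; raises URLError otherwise.
    """
    request = build_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        return json.loads(resp.read())["response"]


# Example call (assumes the model tag below is installed locally):
# print(generate("llama3.3", "Explain unified memory in one sentence."))
```

Because the engine is just an HTTP service, the same few lines work from a script, a cron job, or a container, which is exactly why the interface layer can be swapped out without touching it.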

Analyst’s Take: Specialization is the ultimate sign of a maturing market. By separating the engine from the steering wheel, developers can now build more stable, agent-driven applications.

——————————————————————————–

vLLM V1 and the Dawn of “Omni-Modality”

The most significant shift in production-grade serving is the release of vLLM v0.11.0, which removed the aging V0 engine entirely and left V1 as the only code path. The V1 engine is a ground-up redesign aimed at the highest tier of consumer and enterprise hardware, including NVIDIA’s Blackwell generation (such as the RTX 5090).

But the real surprise is vLLM-Omni. We have moved beyond the text-only chatbot. By integrating Diffusion Transformers (DiT) alongside autoregressive reasoning, vLLM-Omni enables a single unified framework to handle:

- Text-to-Image and Image-to-Text reasoning.

- Real-time audio transcription and translation.

- Video generation and complex visual reasoning.

Analyst’s Take: vLLM-Omni is the realization of the “Local OpenAI” dream. It allows developers to deploy a single multimodal stack that handles the entire spectrum of human input without ever touching the cloud.

——————————————————————————–

Quantization is No Longer a “Quality vs. Size” Zero-Sum Game

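Quantization, the art of squeezing floating-point weights (FP32, or more commonly the 16-bit formats most models ship in) into INT8 or INT4, has reached a level of sophistication where “smaller” no longer has to mean “dumber.” Using Quantization-Aware Training (QAT) and advanced pruning strategies, models can now sit on the “Pareto Frontier”: the set of configurations where you cannot shrink the footprint further without giving up accuracy, and cannot gain accuracy without growing the footprint.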
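For readers who want to see the trade-off rather than take it on faith, here is a minimal sketch of the simplest form of quantization: symmetric round-to-nearest with one shared scale. Production schemes (per-group scales, QAT, and the like) are considerably more refined, and the function names here are illustrative only.

```python
def quantize_symmetric(weights, bits):
    """Map floats to signed integers in [-qmax, qmax] using one shared scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for INT8, 7 for INT4
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer level and clamp to the representable range.
    q = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate floats from the integer codes."""
    return [v * scale for v in q]


def max_error(weights, bits):
    """Largest absolute round-trip error at the given bit-width."""
    q, scale = quantize_symmetric(weights, bits)
    restored = dequantize(q, scale)
    return max(abs(w - r) for w, r in zip(weights, restored))


def footprint_gb(n_params, bits):
    """Raw weight storage in gigabytes, ignoring scales and other metadata."""
    return n_params * bits / 8 / 1e9


# Toy weights, made up for illustration.
weights = [0.02, -0.51, 0.33, 1.27, -0.9]
print("INT8 max error:", max_error(weights, 8))
print("INT4 max error:", max_error(weights, 4))
print("70B @ 16-bit:", footprint_gb(70_000_000_000, 16), "GB")
print("70B @ 4-bit: ", footprint_gb(70_000_000_000, 4), "GB")
```

The footprint arithmetic is the part that matters for hardware: a 70B-parameter model needs about 140 GB for its weights at 16 bits and about 35 GB at 4 bits. The rounding error grows as the bit-width drops, and techniques like QAT exist to keep that error from turning into lost accuracy.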

“A quantized LLM does not experience significant loss of accuracy if a module is replaced with a slightly lower bit-width counterpart.”

The FlexQuant research highlights a critical threshold: models tolerate pruning remarkably well, but only up to a point. You can cut a model’s footprint substantially, yet the research warns that stability and accuracy take a “significant hit” once the pruning rate passes 40%.

Analyst’s Take: We have solved the math of “efficient intelligence.” As long as we stay within the 40% pruning guardrails, we can run larger, elite-level models on mid-tier hardware that was previously limited to “toy” versions.

——————————————————————————–

The “Death of Dependency Hell” with Llamafile

For the longest time, the barrier to local AI was the “Python tax”—a nightmare of CUDA drivers, environment variables, and dependency conflicts. Mozilla.ai’s revival of Llamafile has effectively ended this era by turning complex AI models into single, portable executable files.

Llamafile bundles the model weights and the runtime into one file that runs on virtually any hardware (CPU or GPU) and any major OS. This is a game-changer for:

- Offline Distribution: Handing over a fully functioning AI assistant on a single USB stick.

- Edge Deployment: Running advanced models in air-gapped or restricted environments where complex software stacks are forbidden.

- Server V2 Evolution: Recent updates have added product-level features like progress bars for prompt processing and improved OpenAI-compatible endpoints.
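Those OpenAI-compatible endpoints are what make a Llamafile easy to slot into existing code. Here is a minimal sketch, assuming a llamafile is already running in server mode on its default port (8080); the `local-model` name is a placeholder and the helper functions are ours. Since the request follows the OpenAI chat format, pointing `base_url` at another OpenAI-compatible local server should work the same way.

```python
import json
import urllib.request

# Assumed: a llamafile server listening on its default local port.
BASE_URL = "http://localhost:8080/v1"


def chat_request(user_text, model="local-model", base_url=BASE_URL):
    """Build an OpenAI-style chat completion request for a local server."""
    body = {
        "model": model,  # placeholder; a single-model server serves what it bundles
        "messages": [
            {"role": "system", "content": "You are a concise assistant."},
            {"role": "user", "content": user_text},
        ],
        "temperature": 0.2,
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def extract_reply(response_json):
    """Pull the assistant text out of an OpenAI-format response."""
    return response_json["choices"][0]["message"]["content"]


def chat(user_text, timeout=120.0):
    """Send one message and return the reply. Requires the server to be up."""
    with urllib.request.urlopen(chat_request(user_text), timeout=timeout) as resp:
        return extract_reply(json.loads(resp.read()))


# Example call (only works while the llamafile server is running):
# print(chat("What fits on a single USB stick these days?"))
```

Nothing here needs a package manager, a virtual environment, or a GPU driver on the client side, which is very much in the spirit of the “just run it” pitch.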

Analyst’s Take: Llamafile is the “just run it” solution the industry desperately needed. It has moved local AI from a technical hurdle to a portable utility.

——————————————————————————–

Conclusion: The 2026 Reality Check

The shift from “Cloud-First” to “Local-By-Default” is no longer a technical preference—it is a privacy mandate. With models like Llama 3.3, DeepSeek R1, and Phi-4, the performance gap has vanished. We have entered an era where your device’s intelligence is no longer contingent on a data center’s signal or a third party’s terms of service.

When your local device can think, reason, and see as well as the cloud—while keeping every byte of your data on-disk—why would you ever go back?
