In the hyper-accelerated digital landscape of 2026, viral trends often present as bursts of chaotic, near-mystical energy—a specific sound, a frantic stunt, or a singular dance move that saturates the collective consciousness in hours. To the uninitiated, virality looks like a lightning strike. However, as a digital culture strategist, I look past the “random” myth of the feed to the rigid systems beneath. Behind the perceived chaos lies a sophisticated network of algorithmic feedback loops and behavioral triggers designed to exploit information overload (Narayanan, 2023). In an age where we are constantly inundated with data, we have effectively outsourced our attention to a repeatable system. This exploration deconstructs the psychological, legal, and strategic frameworks that dictate online virality today.

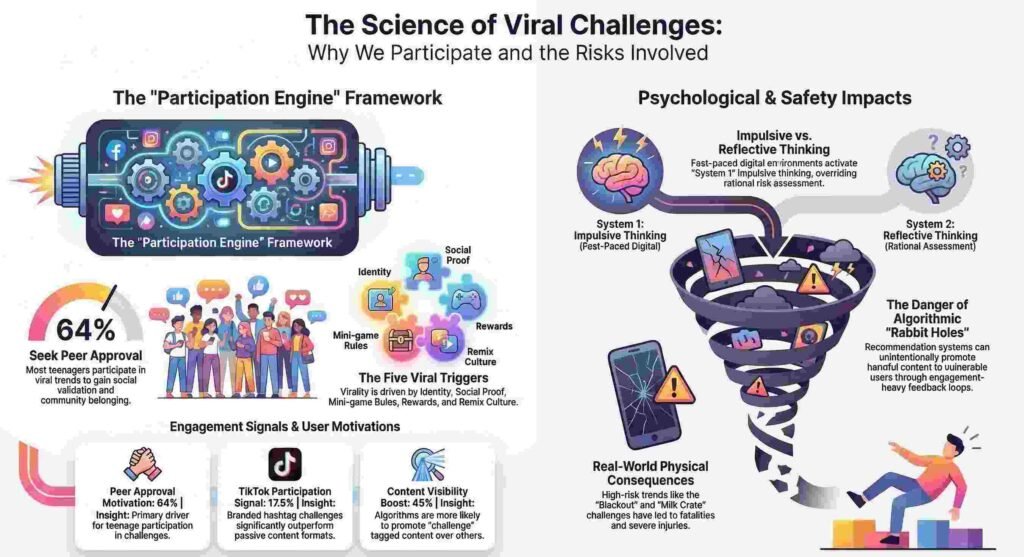

1. Virality is a Participation Engine, Not a View Count

The fundamental metric of success has shifted from “passive viewing” to “active copying.” In 2026, a video that merely gathers views is a dead end. To achieve true virality, content must function as a Participation Engine: a modular template designed for others to remix, mimic, and inhabit. Content stops being something people only consume and becomes a building block they use to express identity.

According to the “Viral Challenge Framework,” a trend only reaches escape velocity when it contains four essential components:

- One Simple Action: A low-friction dance move or “before/after” reveal that anyone can replicate in minutes.

- One Clear Rule: Constraints like “Do it in 10 seconds” or “Use this specific sound” that reduce the cognitive load of creativity.

- Built-in Payoff: A punchline, a “glow-up,” or a moment of social validation.

- Remix Space: A flexible architecture that allows users to personalize the content without breaking the core template.

Furthermore, 2026 data suggests that interactive multipliers (such as polls, 360-degree experiences, and interactive overlays) act as high-octane fuel for this engine. These features ensure that users aren’t just watching; they are exploring, making choices, and replaying, which strengthens the participation signal.
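As a rough illustration, the four components listed above can be treated as a pre-launch checklist. The sketch below is only one way to express that idea; the class name `ChallengeIdea`, its field names, and the helper `missing_components` are hypothetical and are not part of the Viral Challenge Framework itself.

```python
from dataclasses import dataclass, asdict


@dataclass
class ChallengeIdea:
    """Hypothetical description of a challenge concept (field names are illustrative)."""
    simple_action: str  # e.g. a low-friction dance move or before/after reveal
    clear_rule: str     # e.g. "do it in 10 seconds"
    payoff: str         # e.g. a punchline or glow-up
    remix_space: str    # how participants can personalize the template


def missing_components(idea: ChallengeIdea) -> list[str]:
    """Return the names of the components that were left blank."""
    return [name for name, value in asdict(idea).items() if not value.strip()]


# Usage: an idea with no room for remixing fails the checklist.
idea = ChallengeIdea(
    simple_action="Before/after reveal",
    clear_rule="Use this specific sound",
    payoff="Glow-up moment",
    remix_space="",
)
print(missing_components(idea))
```

A check like this only confirms that each component has been thought about; it says nothing about whether the idea will actually spread.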

“A viral challenge is not ‘a video that gets views.’ It’s a participation engine: content designed so people copy it, remix it, and tag friends to join. If your idea only works when your brand performs it, it’s not a challenge yet.” — AdSpyder Guide

2. The Algorithm is an “Author,” Not a Mirror

For decades, platforms hid behind the shield of Section 230 of the Communications Decency Act, claiming they were neutral repositories for user content. That shield is now being tested. In Anderson v. TikTok (2024), the Third Circuit, relying heavily on the Supreme Court’s reasoning in Moody v. NetChoice, treated recommendation algorithms such as the “For You Page” (FYP) as the platform’s own “expressive activity.”

On this reasoning, when an algorithm selects, prioritizes, and promotes a specific video to a minor using metadata (age, location, past interactions), it is exercising editorial judgment. It is not a passive host; it is an affirmative promoter. By curating an “expressive product,” the platform is effectively acting as an author of the user’s experience. This distinction is critical: if an algorithm pushes a lethal trend like the “Blackout Challenge” to a vulnerable user, the platform may no longer be immune from liability for that recommendation. The algorithm doesn’t just show us what we like; it actively shapes the cultural conversation.

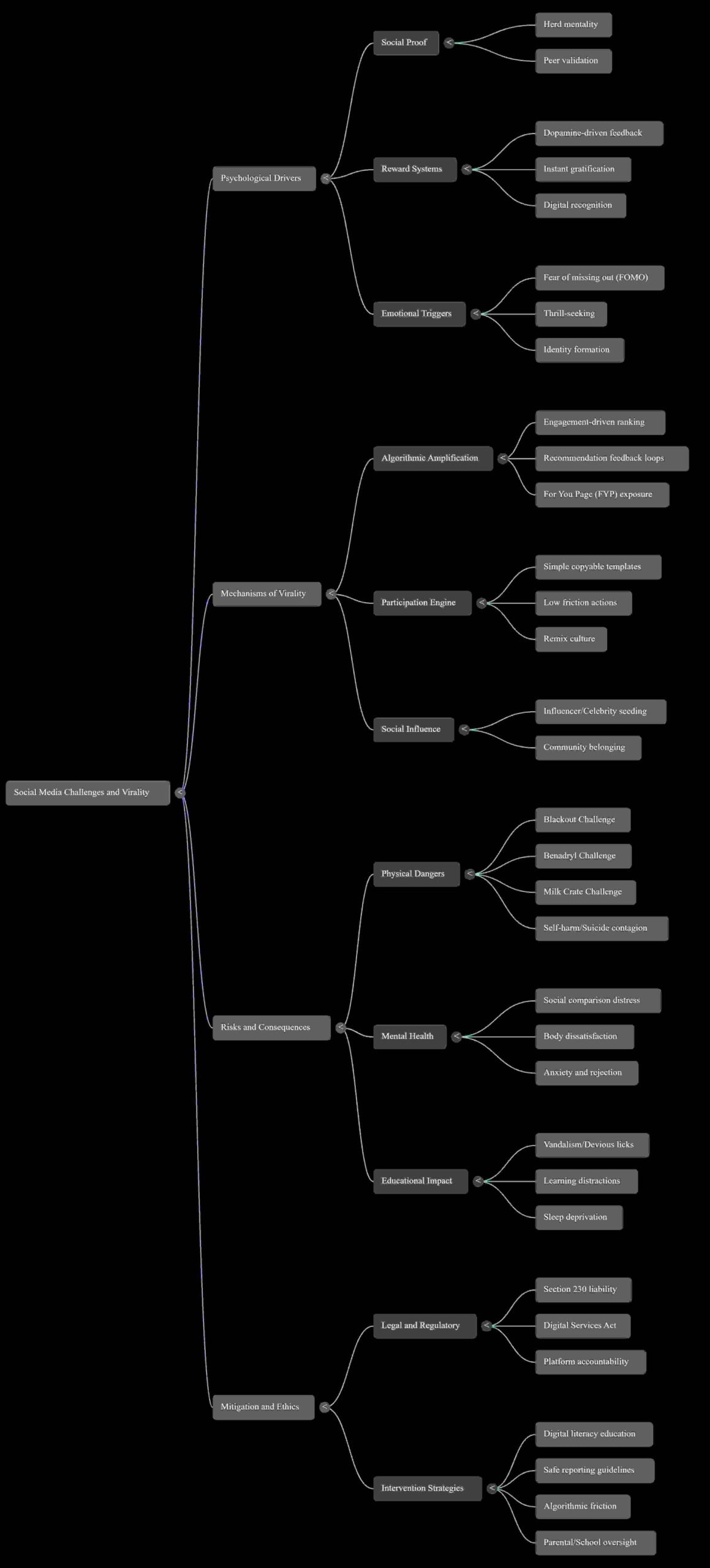

3. The “Chameleon Effect” and the Dopamine Feedback Loop

The decision to join a viral dare is rarely a rational one. It sits at the intersection of neurobiology and the “Chameleon Effect,” the largely unconscious tendency to mimic the behavior of those around us, which helps signal belonging. In the digital sphere, this mimicry is a powerful “participation signal” used to navigate identity formation.

According to the Art and Society study (2025), 72% of young users participate in challenges specifically because they see friends or influencers doing the same. This behavior is reinforced by dopamine-driven gratification: every “like” or “share” triggers a neurochemical reward, creating a loop that encourages repeated engagement. For adolescents, whose reward-processing brain regions are especially sensitive, the Fear of Missing Out (FOMO) produces emotional arousal that can override rational risk assessment. The impulse to remain socially relevant within the “social aggregate” often carries more weight than the physical risk of the challenge itself.

4. The Counter-Intuitive Truth About “Echo Chambers”

One of the most persistent myths of digital culture is that we are trapped in large, isolated echo chambers. In reality, recent research into social drivers reveals that online echo chambers are often smaller than our offline social circles. The danger, however, is not that we aren’t seeing opposing views—it’s that we are seeing the worst of them.

The result is affective polarization. When algorithms surface the most extreme, toxic voices of the “other side” to drive engagement, they trigger intense partisan animosity. We aren’t being shielded from the opposition; we are being provoked by a distorted version of it. The same amplification applies to behavior, where media exposure can produce two opposite forms of contagion:

- The Werther Effect: The spread of harmful, maladaptive behaviors (like risky challenges or self-harm) through media exposure.

- The Papageno Effect: The potential for media to serve a positive role by promoting help-seeking behaviors and mental health literacy.

The current challenge for behavioral strategists is tilting the scale from the contagion of the Werther Effect toward the constructive influence of the Papageno Effect.

5. Why the Future of Safety Requires Structural “Friction”

We have moved from “Social Media” (built on social links and friends) to “Algorithmic Media” (built on automated recommendation). In this new paradigm, the platform’s power to determine content distribution is absolute. As Munger (2020) noted, the immersive nature and unpredictable virality of these recommendation engines make traditional safety measures obsolete.

The solution is the intentional design of “Friction.” To prevent impulsive engagement with dangerous dares—such as the “Milk Crate” or “Benadryl” challenges—platforms must prioritize long-term well-being over short-term engagement metrics. “Ethical Design” in 2026 involves:

- Undo Prompts: Forcing a moment of reflection before a user posts a hateful or high-risk comment.

- Humanizing Prompts: Algorithmically encouraging perspective-taking to reduce affective polarization.

- Time Gaps: Implementing structural delays before allowing the sharing of content flagged for high “social contagion” risk.
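To make the “Time Gaps” idea concrete, here is a minimal sketch of a share gate that delays resharing of content flagged as high risk. Everything in it is an assumption made for illustration: the `contagion_risk` score, the `RISK_THRESHOLD` cutoff, and the ten-minute `SHARE_DELAY` are hypothetical values, not figures from any platform or study.

```python
from datetime import datetime, timedelta

RISK_THRESHOLD = 0.7                  # hypothetical cutoff for "high social contagion risk"
SHARE_DELAY = timedelta(minutes=10)   # hypothetical cooling-off period


def can_share_now(contagion_risk: float, first_viewed_at: datetime, now: datetime) -> bool:
    """Allow sharing immediately for low-risk content; otherwise require a delay."""
    if contagion_risk < RISK_THRESHOLD:
        return True
    # High-risk content: the user must wait out the cooling-off period first.
    return now - first_viewed_at >= SHARE_DELAY


# Usage: a flagged video viewed three minutes ago cannot be shared yet.
viewed = datetime(2026, 1, 1, 12, 0)
print(can_share_now(0.9, viewed, viewed + timedelta(minutes=3)))
```

The design choice worth noting is that the gate never blocks sharing outright; it only inserts a pause, which is the structural friction the list above describes.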

“A platform’s algorithm that reflects ‘editorial judgments’ about ‘compiling the third-party speech it wants in the way it wants’ is the platform’s own ‘expressive product.’ Therefore, it is protected by the First Amendment.” (Third Circuit, Anderson v. TikTok, summarizing the Supreme Court in Moody v. NetChoice LLC)

From Clicks to Consciousness

We are witnessing a shift toward digital literacy and platform accountability. The evidence reviewed here indicates that trends are not random accidents; they are the output of participation engines fueled by neurochemistry and accelerated by “expressive” algorithms acting as editors.

The stakes of this system are increasingly physical. The Omega Law Group 2025 study linked over 100 deaths and tens of thousands of emergency room visits to viral dares, underscoring that the current engagement-at-all-costs model is unsustainable. As we move forward, the question is no longer just how to go viral, but at what cost.
